
For several years, Cloud computing has been the subject of growing interest in all economic sectors, especially in regulated industrial sectors (pharmaceutical, medical devices…).

Although the main market stakeholders (Amazon Web Services, Microsoft Azure, Google…) highlight the economic benefits of such an architecture, companies in the healthcare sector should ask how such a change in hardware and software infrastructure affects their regulated applications: what about data localization, the presumed opacity of change management, the confidentiality of the hosted data…?

This article presents this new paradigm for providing application services through an example of infrastructure migration to a Cloud environment at the level of an international company, while retaining the quality and integrity of the regulated data.

Some definitions

We will start by defining the term “Cloud computing” using the standardized definition of the NIST(2): Cloud computing is a model that enables ubiquitous, convenient, on-demand network access to a shared pool of configurable computing resources (for example networks, servers, storage, applications and services) that can be rapidly provisioned and released with minimal management effort or service provider interaction.

This model is composed of five essential characteristics, three service models and four deployment models.

The five essential characteristics

1. Self-service on demand

A customer can unilaterally provision computing capabilities, such as server time and network storage, as needed and automatically, without requiring human interaction with each service provider.

2. Broad network access

The capabilities are available over the network and are accessed through standard mechanisms that promote use by heterogeneous thin or thick client platforms (for example mobile phones, tablets, laptops and workstations).

3. Pooling resources

The computing resources of the provider are pooled to serve several customers using a “multi-tenant” model, with different physical and virtual resources dynamically assigned and reassigned according to consumer demand. There is a sense of location independence, in that the customer generally has no control over or knowledge of the exact location of the resources supplied but may be able to specify a location at a higher level of abstraction (for example country, state or data center). The resources available include, for example, storage, processing, memory and network bandwidth.

4. Rapid elasticity

The capabilities can be provisioned and released elastically, in some cases automatically, to scale rapidly up or down with demand. To the consumer, the capabilities available often appear unlimited and can be appropriated in any quantity at any time.

5. Measured service

Cloud systems automatically control and optimize the use of resources by means of a metering capability at a level of abstraction appropriate to the type of service (for example storage, processing, bandwidth and active user accounts). Resource usage can be monitored, controlled and reported, which provides transparency for both the provider and the consumer of the service.

Service models

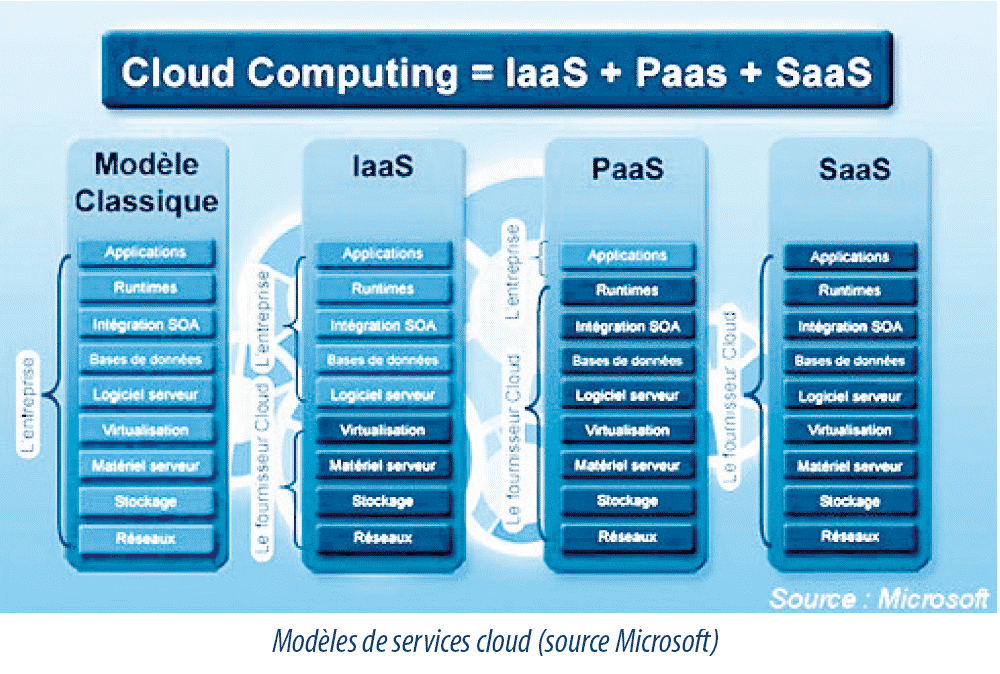

- Software as a Service or SaaS

This model gives the consumer the possibility of using the provider's applications running on a Cloud infrastructure. The applications can be accessed from various client devices through either a thin client interface such as a Web browser (for example, Web-based email) or a program interface (API). The consumer neither manages nor controls the underlying Cloud infrastructure, including the network, servers, operating systems, storage, or even individual application capabilities, with the possible exception of limited user-specific application configuration settings.

- Platform as a Service – PaaS

This model allows the consumer to deploy onto the Cloud infrastructure applications that the consumer has created or acquired, built using programming languages, libraries, services and tools supported by the provider. The consumer neither manages nor controls the underlying Cloud infrastructure, including the network, servers, operating systems or storage, but controls the applications deployed and possibly the configuration settings of the application-hosting environment.

- Infrastructure as a Service – IaaS

This model allows the consumer to provision processing, storage, networks and other basic IT resources on which the consumer can deploy and run software of their choice, which may include operating systems and applications. The consumer neither manages nor controls the underlying Cloud infrastructure but controls the operating systems, storage and deployed applications, and may have limited control of selected network components (for example host firewalls).

Modes of deployment

- Private Cloud

In this mode the Cloud infrastructure is provided for the exclusive use of a single organization comprising several consumers (for example business units). It may be owned, managed and operated by the organization, a third party or a combination of these, and it may exist inside or outside the premises of the organization.

- Community Cloud

The Cloud infrastructure is provided for the exclusive use of a specific community of consumers from organizations with shared concerns (for example mission, security requirements, regulatory rules and compliance constraints). It may be owned, managed and operated by one or more organizations of the community, a third party or a combination of these, and it may exist on their own premises or externally. For example, air transport companies have implemented this type of deployment to facilitate the numerous exchanges between them (reservations, cross invoicing…).

- Public Cloud

The Cloud infrastructure is provided for open use by the general public. It may be owned, managed and operated by a commercial, academic or governmental body, or a combination of these. It exists on the premises of the Cloud provider.

- Hybrid Cloud

The Cloud infrastructure is a composition of two or more distinct Cloud infrastructures (private, community or public) which remain separate entities but are linked by a standardized or proprietary technology that allows portability of data and applications (for example load balancing between Clouds).

The main standards and frameworks

The main players in the Cloud market are confronted with numerous and varied requirements given the different professions and fields that they serve.

They must therefore adopt the strictest quality requirements to satisfy the level of security and confidentiality required by the most demanding sectors (banking, health, defense…). Most of them are certified or attested against the main standards and frameworks in these different areas:

- ISO 27001:2013

International standard for information security management systems, published in October 2005 and revised in 2013. It is the most widely adopted security standard and specifies requirements for establishing, implementing, maintaining and continually improving an information security management system within an organization.

- SOC 1, 2, 3

Service Organization Control (SOC) reports are prepared by an auditor in compliance with American Institute of Certified Public Accountants (AICPA) standards and are specifically intended to evaluate the controls of a service organization against five trust criteria: security, availability, processing integrity, confidentiality and privacy.

- PCI-DSS

The PCI DSS standard was established by the payment card brands and is managed by the PCI Security Standards Council (an open international forum for the development, dissemination and implementation of security standards for the protection of account data). It was created to strengthen control over cardholder information and so reduce fraudulent use of payment instruments.

- HIPAA

Title II of the Health Insurance Portability and Accountability Act (HIPAA) defines the American standards for the electronic management of health insurance, the transmission of electronic health care transactions and the identifiers required to process those transactions electronically.

- GDPR (General Data Protection Regulation)

Applicable since 25 May 2018, the European regulation on data protection imposes specific obligations on processors, who may be held liable in the event of a breach. These obligations affect all bodies that process personal data on behalf of another body as part of a service, such as IT service providers (hosting, maintenance…). Processors must comply with specific obligations regarding security, confidentiality and documentation of their activity. They must take data protection into account from the design stage of the service or product and must, by default, put in place measures that ensure optimal protection of the data.

Most of these standards are not specific to the pharmaceutical industry but cover most of the requirements relating to data security, namely:

- Integrity. The data must be what they are expected to be and must not be corrupted accidentally or intentionally.

- Confidentiality. Only authorized persons have access to the information intended for them. Any unwanted access must be prevented.

- Availability. The system must operate faultlessly during the planned usage slots, and guarantee access to the installed services and resources within the expected response time.

But also relative to:

- Non-repudiation and accountability. No user must be able to dispute the operations they have performed as part of their authorized activities, and no third party must be able to claim the actions of another user as their own.

- Authentication. Identification of users is crucial for managing access to relevant workspaces and maintaining confidence in interactive relationships.

As regards regulatory texts more specifically connected to the pharmaceutical industry, references to “Cloud computing” can be found in the following reference documents:

- 21 CFR Part 11(3)

21 CFR Part 11 introduces the open system concept, defined as an environment in which access to the system is not controlled by the persons responsible for the content of the electronic records held in that system. For these systems, §11.30 requires that “Persons who use open systems to create, modify, maintain, or transmit electronic records shall employ procedures and controls designed to ensure the authenticity, integrity, and, as appropriate, the confidentiality of electronic records from the point of their creation to the point of their receipt. Such procedures and controls shall include those identified in §11.10, as appropriate, and additional measures such as document encryption and use of appropriate digital signature standards to ensure, as necessary under the circumstances, record authenticity, integrity, and confidentiality”.

- WHO(4)

In the context of outsourcing agreements, the WHO guide emphasizes that “the responsibilities of the contract giver and contract acceptor, defined in a contract as described in the WHO guidelines, should completely cover the data integrity processes of the two parties related to the outsourced work or the services provided… These responsibilities extend to all suppliers of IT services, data centers, and database and IT systems maintenance staff under contract, as well as suppliers of “Cloud computing” solutions… Staff who periodically audit and assess the competence of a body or service supplier under contract should have the appropriate knowledge, qualifications, experience and training to assess data integrity governance systems and to detect problems of validity. The assessment, frequency and approach of the monitoring or periodic assessment of the contract acceptor must be based on a documented risk assessment which includes an assessment of data processes”.

Finally, this document emphasizes that “the data integrity control strategies provided for should be included in quality agreements and written contractual and technical provisions, where applicable, between the contract giver and the contract acceptor. These should include provisions allowing the contract giver to access all the data held by the organization under contract related to the product or the service of the contract giver, as well as all the relevant quality system records. This should include access for the contract giver to electronic files including audit trails, held in the organization’s computerized systems, as well as all printed reports and other relevant paper or electronic documents.

When data and document storage is entrusted to a third party, particular attention should be paid to understanding the issues of ownership and recovery of the data held in connection with this arrangement. The physical site where the data is stored, including the impact of the laws applicable to this geographical location, should also be taken into account. Agreements and contracts should lay down mutually agreed consequences if the contract acceptor refuses or limits access by the contract giver to the records held by the contract acceptor. When databases are outsourced, the contract giver must ensure that the subcontractors, in particular the providers of Cloud services, are included in the quality agreement and correctly trained in record and data management. Their activities should be regularly monitored on the basis of a risk assessment”.

- US FDA(5)

This guidance proposes useful recommendations on the use of electronic records and signatures in clinical studies, and more particularly on the use of application services in the Cloud.

An infrastructure migration project in the Cloud at international company level

In June 2017, Baxter Healthcare initiated a project to migrate its IT servers to a Cloud-based architecture, covering around 450 servers and a portfolio of 20 GxP applications and 80 non-GxP applications (finance, sales…).

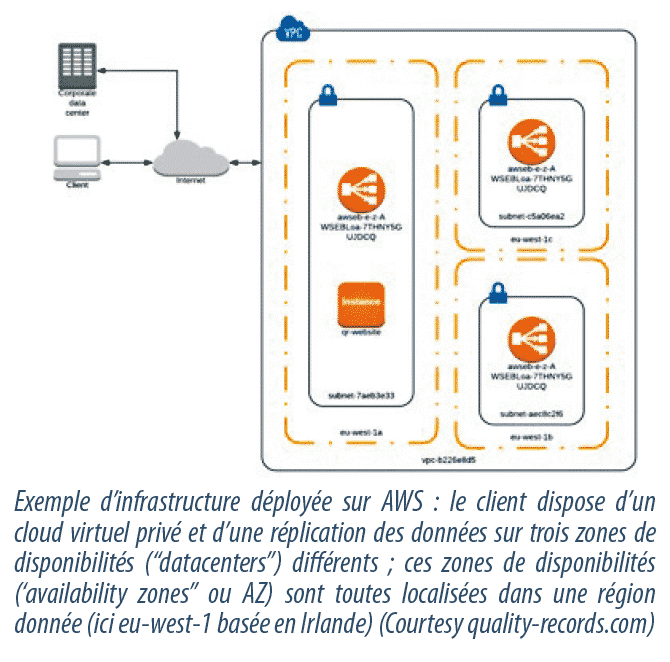

The preparation stage lasted six months and finished at the end of December 2017 with the selection of Amazon Web Services (AWS) as service provider. AWS, the market leader, met the call for tender criteria and held the quality certifications required for such a project.

During this preparation stage and given the large number of servers to be migrated, a preliminary assessment allowed us to classify the systems and determine the possible migration options in accordance with the following possibilities:

- Decommissioning. The application is at the end of life and can be stopped without difficulty for business. Users are informed of this and the infrastructure will be decommissioned in accordance with a pre-established procedure. For each application identified as GxP, a decommissioning plan will be produced with archiving of the GxP records required for the legal retention period.

- Rationalization. Several instances of the same application can be combined in order to reduce the number of instances to host. This pooling effort must be carried out in accordance with the change management procedure, with great care paid to data confidentiality.

- Simple hosting. The application can be hosted in a Cloud infrastructure with a minimum of change. The change management process for applications and infrastructure is applied during the move to the future data center.

- Cloud conversion. The application must be updated in order to be hosted in the Cloud. A project is initiated in accordance with the change management procedure for applications incorporating the necessary specification and design stages, as well as the ensuing validation stages.
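The four options above lend themselves to a simple decision rule. The following is a minimal sketch, assuming a hypothetical set of assessment inputs (`end_of_life`, `duplicate_instances`, `cloud_compatible`) and a hypothetical order of precedence; the article does not describe the actual criteria used in the project.

```python
from dataclasses import dataclass
from enum import Enum


class MigrationOption(Enum):
    DECOMMISSIONING = "decommissioning"
    RATIONALIZATION = "rationalization"
    SIMPLE_HOSTING = "simple hosting"
    CLOUD_CONVERSION = "cloud conversion"


@dataclass
class SystemProfile:
    # All fields are hypothetical assessment inputs chosen for this sketch.
    name: str
    is_gxp: bool
    end_of_life: bool
    duplicate_instances: int  # other instances of the same application
    cloud_compatible: bool    # can run in the Cloud with minimal change


def classify(system: SystemProfile) -> MigrationOption:
    """Map a preliminary assessment to one of the four migration options."""
    if system.end_of_life:
        return MigrationOption.DECOMMISSIONING
    if system.duplicate_instances > 0:
        return MigrationOption.RATIONALIZATION
    if system.cloud_compatible:
        return MigrationOption.SIMPLE_HOSTING
    return MigrationOption.CLOUD_CONVERSION


def needs_decommissioning_plan(system: SystemProfile) -> bool:
    """A decommissioned GxP application needs a plan that archives its records."""
    return system.is_gxp and classify(system) is MigrationOption.DECOMMISSIONING
```

In practice the classification would be reviewed system by system; the value of writing the rule down is that every one of the 450 servers is assessed against the same criteria.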

An approach focused on data security and integrity

In the case of systems decommissioning, the two following options are practiced:

- The database is kept as read-only, and it is possible to access regulated data via reports or validated requests.

- The application is kept on a virtual server so that the data remain accessible through the application. This virtual machine, which is activated solely on request in the event of an audit or an inspection, requires particular attention (link between the database and the application, specific name or IP address…). This method of storage can be used in the majority of cases, except where operating systems are incompatible.
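The first option, a database kept as read-only, can be illustrated with a small sketch. It uses SQLite from the Python standard library purely as a stand-in for whatever database engine a decommissioned system actually runs on; the table and column names are hypothetical.

```python
import os
import sqlite3
import tempfile
from pathlib import Path


def open_read_only(db_path: str) -> sqlite3.Connection:
    """Open an archived SQLite database so that any write is rejected."""
    uri = Path(db_path).resolve().as_uri() + "?mode=ro"
    return sqlite3.connect(uri, uri=True)


def demo() -> tuple:
    """Build a small archive, reopen it read-only, query it and attempt a write."""
    db_path = os.path.join(tempfile.mkdtemp(), "archive.db")

    # Populate the database while the system is still live (hypothetical schema).
    live = sqlite3.connect(db_path)
    live.execute("CREATE TABLE batch_record (batch_id TEXT, result TEXT)")
    live.execute("INSERT INTO batch_record VALUES ('B-001', 'PASS')")
    live.commit()
    live.close()

    archived = open_read_only(db_path)
    try:
        # Regulated data stay reachable through a fixed, reviewable query.
        rows = archived.execute(
            "SELECT batch_id, result FROM batch_record"
        ).fetchall()
        try:
            archived.execute("UPDATE batch_record SET result = 'FAIL'")
            write_rejected = False
        except sqlite3.OperationalError:
            write_rejected = True
    finally:
        archived.close()
    return rows, write_rejected
```

Calling `demo()` returns the archived rows together with a flag showing that the attempted update was refused, which is the behavior an inspector would expect from a retained read-only archive.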

For each system/application in the project scope, as identified in the configuration management database (CMDB), a quality risk analysis is performed to determine the degree of validation required for the change of infrastructure. A specific regulatory risk analysis assesses the impact and defines any measures needed to guarantee data integrity, and a security risk analysis defines the security provisions in the Cloud (virtual private Cloud, multi-factor authentication, configuration of the virtual machine image (AMI)…).

All of these analyses give rise to a report which will consolidate the results and risk-control methods for each system concerned.

The project process can then go ahead with the writing of the specifications for the future system (functional and technical specifications) which will enable identification of the migration tool to be implemented.

The development environment is created on the Cloud platform and, for applications requiring reconstruction, the application code, which is secured in a source code manager, is modified in accordance with the approved specifications. Extensive tests are carried out in this environment to avoid irregularities appearing in the validation phase. Once the dry run tests have been completed and proved conclusive, a change request is initiated and pre-approved after verifying the identification and specifications of the application, the risk analysis report and the construction specifications for the qualification environment, which must be identical to the environment verified in development.

The qualification environment is constructed in accordance with the approved specifications and the application is qualified for basic functionalities and critical functions (communication, interfaces, printing, database access…). The tests are recorded and any errors are analyzed; after correction, the tests are re-run in order to check the effectiveness of the corrective action.

The change management records are updated with the references of the tests carried out and the risk analysis report is approved with approval of the change.

The production environment can then be constructed, and specific checks may be carried out if necessary. A monitoring phase of the application in production is set up and the change record is given final approval (closure).

Key points

Each critical stage of the project is subject to strict management of review and approval. Transitions from development instances to verification instances (QA) and then from migration instances to production instances are submitted for the approval of a CAB (Change Advisory Board).

The change management process is at the heart of the project. A change is initiated for each database migration and for each environment, taking care to separate the verification (Quality) environments from the production environment. Each change must include a fallback plan (rollback possible) and be approved by the BPO (Business Process Owner), the CSO (Chief Security Officer) and the QSR (Quality System Representative).

Once the migration has been carried out, an integrity verification report for the migrated data is attached to the change request.
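The article does not describe how the integrity verification report is produced. One common approach, sketched here under that caveat, is to compare a cryptographic digest of each record before and after migration. The record layout and the report fields are assumptions made for illustration.

```python
import hashlib
import json


def record_digest(record: dict) -> str:
    """SHA-256 of a record serialized with sorted keys, so field order is irrelevant."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify_migration(source: dict, target: dict) -> dict:
    """Compare source and migrated records, both keyed by a record identifier."""
    missing = sorted(k for k in source if k not in target)
    unexpected = sorted(k for k in target if k not in source)
    altered = sorted(
        k for k in source
        if k in target and record_digest(source[k]) != record_digest(target[k])
    )
    return {
        "records_checked": len(source),
        "missing": missing,        # present before migration, absent after
        "unexpected": unexpected,  # absent before migration, present after
        "altered": altered,        # present in both but content differs
        "passed": not (missing or unexpected or altered),
    }
```

A report of this kind lists every discrepancy rather than stopping at the first one, so that it can be attached to the change request as evidence whether the check passes or fails.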

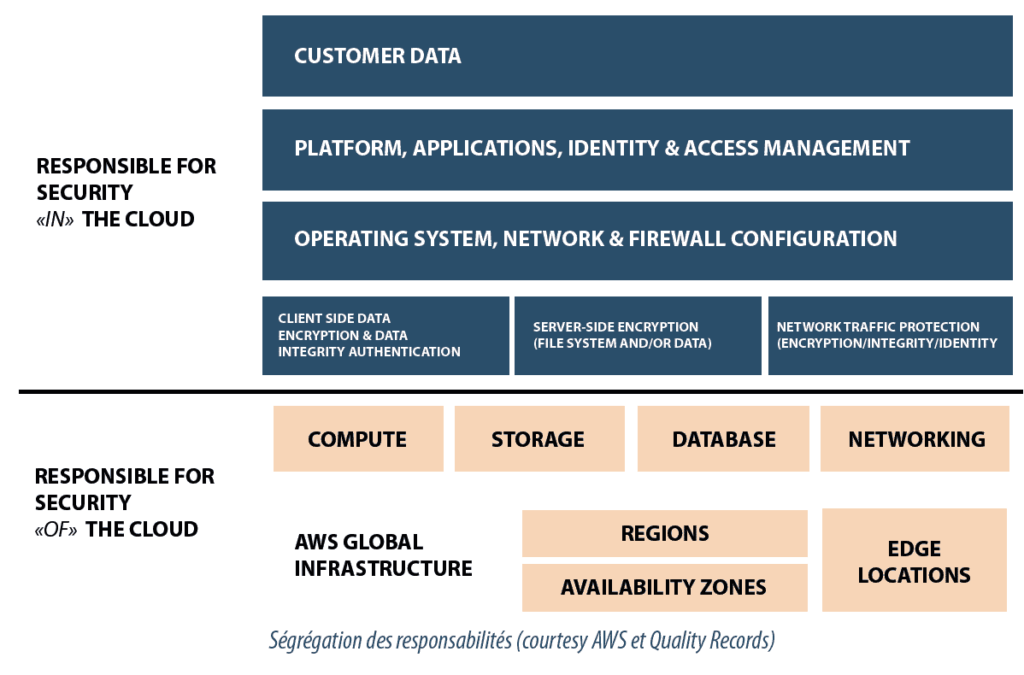

The automation of certain phases results in a noticeable time-saving in implementation. Testing, deviation management and change management are managed through dedicated applications. The infrastructure proposed by Amazon allows segregation of responsibilities:

- Maintenance of the underlying Cloud infrastructure is carried out by Amazon (AWS), which has the required technical resources, staff and quality certifications. AWS is responsible for protecting the infrastructure that runs all the services offered in the Cloud. This infrastructure is made up of the hardware, software, network and facilities that run the AWS Cloud services.

- Maintenance in the Cloud is Baxter’s responsibility, with no change relative to the previous infrastructure supplier: Baxter remains in charge of the maintenance and security of operating systems and applications (antivirus…). When Baxter deploys an Amazon EC2 instance, it is responsible for managing the guest operating system (including updates and security patches), the software, applications and utilities it installs on the instance(s), and the configuration of the firewall supplied by AWS (security group) for each instance.

AWS personnel therefore have no access to the application infrastructure and data deployed by Baxter, which preserves their integrity and security. The data location is guaranteed and change management is shared.

For example:

- Patch management

AWS is responsible for patching faults linked to the infrastructure, while Baxter is responsible for patching its own operating system(s) and application(s).

- Configuration management

AWS maintains the configuration of its infrastructure, but Baxter must configure its own operating systems, databases and guest applications.

- Knowledge and training

AWS trains its employees and Baxter is responsible for training its own employees.
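The split of responsibilities described above can be recorded as a simple lookup, so that every component in scope has exactly one named owner. This is only an illustrative sketch: the component labels are chosen for the example and are not an AWS or Baxter schema.

```python
# Responsibility split as described in the text; component names are
# illustrative labels for this sketch only.
RESPONSIBLE_PARTY = {
    "hardware": "AWS",
    "network": "AWS",
    "facilities": "AWS",
    "infrastructure patching": "AWS",
    "guest operating system": "Baxter",
    "applications": "Baxter",
    "databases": "Baxter",
    "security group configuration": "Baxter",
    "antivirus": "Baxter",
}


def responsible_party(component: str) -> str:
    """Return who maintains a component, or fail loudly if nobody is recorded."""
    try:
        return RESPONSIBLE_PARTY[component.lower()]
    except KeyError:
        raise ValueError(f"no owner recorded for component: {component!r}") from None


def unassigned(components: list) -> list:
    """List the components that still need an owner before migration."""
    return [c for c in components if c.lower() not in RESPONSIBLE_PARTY]
```

Raising an error for an unknown component, rather than defaulting to one party, reflects the point made above: a gap in the responsibility matrix should be resolved explicitly, not assumed.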

In conclusion

Although it is difficult to anticipate short-term financial benefits, savings should be expected in the medium-term (3-5 years) as Baxter no longer has to cope with the obsolescence of network components and IT equipment. In addition, the gradual optimization of the solutions available on this platform will enable noticeable savings to be made on license costs. This project, currently being finalized, demonstrates the capacity and feasibility of migration to a Cloud architecture at international company level while ensuring the integrity of the data and applications transferred.


Jean-Louis JOUVE – COETIC

jean-louis.jouve@coetic.com

Gregory FRANCKX – BAXTER

gregory_franckx@baxter.com

Jean-Sébastien DUFRASNE – BAXTER

Over twenty-three years, Jean-Sébastien has demonstrated leadership and deep expertise through a series of successful experiences in medical devices and Hospital-Renal Products (Baxter), biologics (GSK Vaccines), chemical manufacturing and banking. He is recognized at Baxter as a top talent and as a company expert in computerized systems and data integrity.